By Kawsar Ahmmed | SEO Algorithm Recovery Expert | Updated March 2026

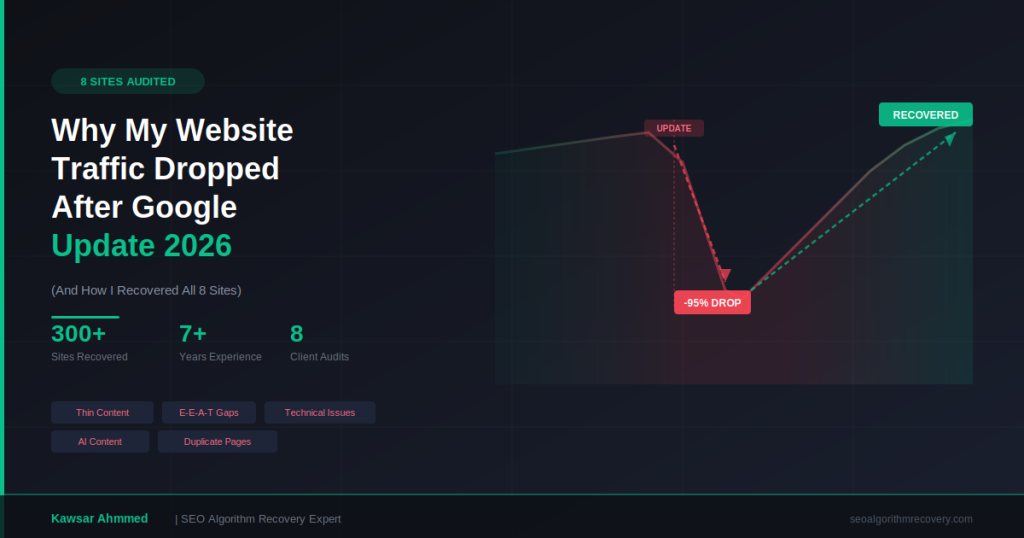

If your website traffic dropped after a Google update in 2026, you are not alone. Over the past 12 months, I have personally audited 8 client websites that lost anywhere from 40% to 95% of their organic traffic following core updates, helpful content updates, and spam updates.

This is not a generic advice article. Everything below is based on real Search Console data, Semrush reports, and Screaming Frog crawl results from the 8 sites I diagnosed and worked on. I will walk you through exactly what went wrong, what patterns I found across all of them, and how you can start diagnosing your own traffic drop today.

If you need hands-on help right now, you can skip ahead and book a free diagnosis call. But if you want to understand why your rankings vanished and whether recovery is realistic, keep reading.


Real Traffic Drop Data From 8 Client Sites I Audited

Before I explain patterns and solutions, let me show you the scale of the problem. Here are the 8 websites I audited, each hit by a different combination of Google algorithm updates between 2024 and 2026.

FreedomCare

FreedomCare is a U.S. home healthcare services provider with state-level landing pages across the country. After a core update, impressions climbed to 5.45M over 16 months but clicks collapsed. The CTR sat at just 1.5% with an average position of 26.8. The site was generating visibility but failing to convert it into actual visits.
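To put those figures in perspective, the click volume implied by the reported impressions and CTR can be worked out directly. This is a rough sketch that uses only the two numbers above; the variable names are illustrative, and because the 1.5% CTR is a rounded figure, the result is an approximation.

```python
# Figures reported above for FreedomCare (16-month Search Console window).
impressions = 5_450_000
ctr = 0.015  # 1.5%, rounded as reported

# CTR = clicks / impressions, so clicks = impressions * CTR.
implied_clicks = round(impressions * ctr)
clicks_per_month = round(implied_clicks / 16)

print(f"Implied clicks over 16 months: {implied_clicks:,}")
print(f"Implied clicks per month: {clicks_per_month:,}")
```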

Traffic Drop Causes:

- Hit by a combination of core update, helpful content update, and accumulated technical issues

- Thin content on state-level landing pages with boilerplate text repeated across dozens of pages

- Missing heading tags and improper on-page structure site-wide

- No multimedia (images, video, infographics) on key service pages

- Weak E-E-A-T signals with no visible author credentials or expert-backed content

- Outbound links not set to nofollow, leaking authority to external sites

Recovery Process:

- Rewrite every state landing page with unique, keyword-researched content (minimum 1,300-1,500 words per page)

- Add proper heading tag hierarchy (H1, H2, H3) across all pages

- Embed relevant multimedia including images, charts, and video to improve engagement signals

- Build E-E-A-T by adding author bios with verifiable healthcare credentials

- Fix outbound link attributes to nofollow where appropriate

- Implement content freshness updates on a scheduled basis

CanadaNewsMedia

This Canadian news aggregation site was hit directly by a March algorithm update. Both Search Console and Semrush data confirmed a sharp traffic decline aligned with the update rollout.

Traffic Drop Causes:

- Content model relied heavily on aggregated and thinly rewritten articles from other sources

- Textbook target for Google’s helpful content classifier due to lack of original reporting

- No unique editorial value, expert commentary, or first-hand sourcing

- Site-wide content quality signal dragging down even stronger pages

Recovery Process:

- Shift from aggregation to original editorial content with unique analysis and local sourcing

- Remove or consolidate the weakest aggregated articles to raise site-wide content quality

- Add bylines with journalist credentials and author pages

- Build topical authority in specific news verticals rather than covering everything broadly

ATLTranslate

A translation services company hit by the January 2024 core update. Traffic dropped severely. The audit revealed a critical structural flaw with their subdomain content strategy.

Traffic Drop Causes:

- January 2024 core update directly impacted the site’s organic rankings

- AI-generated content published on a subdomain (ai.atltranslate.com) that Google refused to index

- Subdomain strategy was diluting the main domain’s authority while producing zero search value

- Most subdomain posts were left out of Google’s index, providing no SEO benefit

Recovery Process:

- Migrate valuable subdomain content to the main domain after quality review and human editing

- Remove or noindex low-quality AI-generated pages that add no unique value

- Consolidate domain authority under a single domain structure

- Rebuild content with human-written, expert-level translation industry articles

- Strengthen on-page SEO across all indexed service pages

HairStudioRichmond

A local hair salon in Richmond, Texas, with almost no digital footprint beyond its website. For a service-area business in a visually driven industry, the lack of platform presence made it nearly impossible to compete.

Traffic Drop Causes:

- Almost zero brand presence outside the website itself

- Invisible on Pinterest despite being in a highly visual industry

- Only 4 YouTube videos with minimal views and no consistent posting

- Missing consistent NAP (Name, Address, Phone) data across local directories

- No local citations, Google Business Profile optimization, or review strategy

Recovery Process:

- Build out Google Business Profile with complete service categories, photos, and regular posts

- Create Pinterest boards showcasing hairstyles, transformations, and salon work

- Launch a YouTube content strategy with tutorials, transformations, and client testimonials

- Submit NAP data to top local directories for citation consistency

- Implement local schema markup on the website

- Encourage and respond to Google reviews to build local trust signals
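As an illustration of the local schema markup step, the sketch below builds a schema.org `HairSalon` JSON-LD snippet. Every business detail in it is a hypothetical placeholder rather than the client's real data, and `build_local_schema` is an illustrative helper name, not part of any library.

```python
import json

def build_local_schema(name, street, city, region, postal_code, phone, url):
    """Return a schema.org HairSalon description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "HairSalon",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": "US",
        },
    }
    return json.dumps(data, indent=2)

# Hypothetical placeholder values for illustration only.
markup = build_local_schema(
    name="Example Hair Studio",
    street="123 Example Street",
    city="Richmond",
    region="TX",
    postal_code="00000",
    phone="+1-555-010-0000",
    url="https://www.example.com/",
)

# The snippet is normally placed inside the page's HTML in a script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The name, address, and phone values should match the NAP data submitted to local directories exactly, since citation consistency is the point of the exercise.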

BlueSkyHotelEforie

A Romanian hotel website hit by the helpful content update. The entire site was underperforming due to foundational SEO gaps across content and technical layers.

Traffic Drop Causes:

- Homepage had extremely thin content with no real keyword targeting

- Full site lacked on-page SEO optimization (titles, metas, headers)

- Tagging and indexing issues preventing proper crawlability

- No local SEO strategy for the Eforie tourism market

- Hit specifically by the helpful content update targeting low-value pages

Recovery Process:

- Rewrite homepage with comprehensive, keyword-targeted content about the hotel and Eforie area

- Conduct local keyword research specific to the Romanian tourism and hotel market

- Fix all tagging and indexing issues to ensure proper page crawlability

- Implement full on-page SEO across every room, amenity, and location page

- Build local SEO presence with Romanian travel directories and Google Business Profile

- Recover from helpful content update by demonstrating genuine local expertise on every page

MukPolice

A niche informational site that experienced a significant traffic decline consistent with recent core update patterns.

Traffic Drop Causes:

- Content quality gaps across informational pages with shallow coverage

- Technical crawlability problems limiting Google’s ability to index key pages

- Site profile matched the thin informational content that recent core updates have targeted

Recovery Process:

- Audit and expand thin informational pages with deeper, more comprehensive content

- Fix crawlability issues to ensure Google can access and index all important pages

- Add E-E-A-T signals including author credentials and editorial standards

- Improve internal linking structure to distribute authority to priority pages

PartsOfAmerica

One of the hardest-hit sites in the group. Traffic had dropped almost to zero. The problems were extensive and layered across content, technical SEO, and guideline compliance.

Traffic Drop Causes:

- Algorithm update hit compounded by pre-existing site-wide quality issues

- Bulk-published content with minimal originality across hundreds of pages

- Near-duplicate articles copied from a sister site (PartsOfCanada.com)

- No proper on-page SEO or technical SEO implemented anywhere on the site

- Not following Google’s webmaster guidelines (now called Google Search Essentials) in any meaningful way

Recovery Process:

- Remove or consolidate all duplicate and near-duplicate content across both domains

- Follow Google guidelines by rewriting remaining content to be unique and genuinely useful

- Implement proper on-page optimization (title tags, meta descriptions, heading structure)

- Fix technical SEO foundation including crawlability, indexation, and site architecture

- Build brand authority and domain trust through original content and legitimate link building

- Establish clear content differentiation between this site and the sister domain

Asturpins.com

A Spanish custom pins e-commerce site with 42 total Screaming Frog issues. This was a textbook case of accumulated technical debt dragging down organic performance.

Traffic Drop Causes:

- Canonicalization warnings on 22 URLs (10.78% of the site) confusing Google’s indexer

- Internal server errors on 8 pages returning 5xx status codes

- Noindex directives on 3 pages that should have been indexed

- Missing page title on a key page

- Pagination URL issues breaking product category navigation

- Pages without internal links (orphan pages invisible to crawlers)

- Multiple H1 tags on 124 pages (60.78% of the site)

- Oversized images on 109 pages hurting page load speed

- Low-content pages on 101 URLs (49.51%) flagged as thin in the crawl
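The percentages in this list can be sanity-checked with a few lines of arithmetic. The sketch below assumes a crawl of 204 URLs; that total is inferred from the reported figures (22 URLs at 10.78%) and is not stated in the audit itself.

```python
# TOTAL_URLS is an inference from the reported percentages, not an audit figure:
# 22 URLs at 10.78% implies roughly 204 crawled URLs.
TOTAL_URLS = 204

# Issue counts as reported in the list above.
issue_counts = {
    "canonicalization warnings": 22,
    "multiple H1 tags": 124,
    "oversized images": 109,
    "low-content pages": 101,
}

def share(count, total=TOTAL_URLS):
    """Percentage of crawled URLs affected, rounded to two decimals."""
    return round(100 * count / total, 2)

# Print the issues from most to least widespread.
for issue, count in sorted(issue_counts.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{issue}: {count} URLs ({share(count)}%)")
```

Ranking issues by share of affected URLs is one simple way to decide what to fix first, although a handful of 5xx errors on key product pages can matter more than a widespread cosmetic issue.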

Recovery Process:

- Fix all canonicalization issues to point Google to the correct preferred URLs

- Resolve server errors and ensure all product pages return 200 status codes

- Remove incorrect noindex tags from pages that need to rank

- Add unique, optimized page titles and single H1 tags to every page

- Repair pagination to ensure proper crawling of product category pages

- Build internal links to orphan pages so crawlers and users can reach them

- Compress and optimize all images to improve Core Web Vitals scores

- Expand thin product pages with unique descriptions, specs, and use-case content

Common Patterns I Found Across All 8 Sites

After completing all 8 audits, clear patterns emerged. These are not theoretical guesses. They are the recurring issues I documented with data from Semrush, Search Console, and Screaming Frog across every single project.

1. Thin and Low-Value Content

Seven out of eight sites had significant thin content problems: pages with fewer than 300 words, boilerplate text repeated across dozens of landing pages, and content that answered no real user question. FreedomCare’s state pages and BlueSkyHotel’s homepage were prime examples. Google’s helpful content system is specifically designed to demote this type of content at a site-wide level.

2. AI-Generated or Duplicate Content

ATLTranslate was publishing AI content on a subdomain that Google refused to index. PartsOfAmerica had bulk-published articles that were near-copies of a sister site. CanadaNewsMedia relied on aggregated rewrites. In each case, Google’s systems identified the content as providing little to no unique value. The 2024-2026 updates have become increasingly aggressive at detecting and demoting content that exists purely for search engine visibility rather than genuine user benefit. Meanwhile, platforms like Bing are now using AI citations to surface original sources directly, which further punishes sites that recycle existing content instead of creating something original.

3. E-E-A-T Gaps

Experience, Expertise, Authoritativeness, and Trustworthiness gaps appeared across almost every audit. FreedomCare needed expert-authored healthcare content with clear author bios and credentials. HairStudioRichmond lacked any cross-platform authority signals. Several sites had no author pages, no about page with verifiable credentials, and no external signals of topical authority. For YMYL (Your Money or Your Life) topics especially, Google gives E-E-A-T extra weight, so deficiencies here are particularly damaging.

4. Technical SEO Failures

Asturpins had 42 crawl issues including broken canonicals, server errors, and missing page titles. FreedomCare had no proper heading tag structure. ATLTranslate’s subdomain content was not being indexed. BlueSkyHotel had tagging and indexing issues. Technical problems alone rarely cause traffic drops after algorithm updates, but they amplify the damage. A site with weak content and broken technical foundations gives Google every reason to demote it.

5. Missing Multimedia and Poor User Experience

FreedomCare pages lacked images, videos, or any visual elements. HairStudioRichmond had almost no YouTube or social media presence despite being in a visually driven industry. Multiple sites had oversized, unoptimized images that hurt page speed. Modern SEO performance depends on content that engages users across formats, not just walls of text.

Step-by-Step Diagnosis Framework Using Google Search Console

If your website traffic dropped after a Google update in 2026, here is the exact process I use with every client to pinpoint the cause before making any changes.

Step 1: Confirm the Timing

Open Google Search Console and go to Performance > Search Results. Set the date range to the last 16 months. Look for the exact date your clicks and impressions started declining. Then cross-reference that date with Google’s official algorithm update history. I built a free Google algorithm update tracker that logs every confirmed rollout with dates and durations, which makes this step much faster. If the drop aligns with a confirmed update, you are likely dealing with an algorithmic issue rather than a technical one.
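The cross-referencing in this step can also be scripted. The sketch below is one possible approach, not the tracker mentioned above: the update names and dates are hypothetical placeholders to be replaced with entries from Google's official update history, and the 7-day grace period after rollout is an arbitrary assumption.

```python
from datetime import date, timedelta

# Hypothetical update windows for illustration only. Replace these with
# real rollout dates from Google's official update history.
UPDATE_WINDOWS = [
    ("Update A (hypothetical)", date(2026, 3, 3), date(2026, 3, 20)),
    ("Update B (hypothetical)", date(2026, 1, 12), date(2026, 1, 19)),
]

def match_updates(drop_date, windows=UPDATE_WINDOWS, grace_days=7):
    """Return names of updates whose rollout, plus a grace period, covers drop_date."""
    grace = timedelta(days=grace_days)
    return [
        name
        for name, start, end in windows
        if start <= drop_date <= end + grace
    ]

# A drop that began on 10 March 2026 falls inside the first window.
print(match_updates(date(2026, 3, 10)))
# A drop on 15 February 2026 matches nothing, which points away from
# an update and toward a technical or seasonal cause.
print(match_updates(date(2026, 2, 15)))
```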

Step 2: Identify Which Pages Lost Traffic

In Search Console, go to the Pages tab. Compare the last 3 months against the previous 3 months. Sort by clicks (descending) and look for pages that had significant traffic before the update but dropped to near-zero after. Export this data. These are your priority pages for analysis.
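Once the data is exported, the comparison can be automated. The following is a minimal sketch with hypothetical page paths and click totals; the thresholds (at least 100 clicks beforehand, a drop of 50% or more) are assumptions to adjust for your own site.

```python
# Hypothetical per-page click totals for the two comparison periods.
previous = {"/services/": 1200, "/blog/guide/": 800, "/contact/": 50}
current = {"/services/": 1100, "/blog/guide/": 40, "/contact/": 45}

def biggest_losers(previous, current, min_previous_clicks=100, min_drop=0.5):
    """List pages that had meaningful traffic before and lost most of it.

    Returns (page, clicks_before, clicks_after, drop_percent) tuples,
    sorted by absolute clicks lost, largest first.
    """
    rows = []
    for page, before in previous.items():
        if before < min_previous_clicks:
            continue  # too little traffic beforehand to be a priority
        after = current.get(page, 0)  # a page missing from the export got no clicks
        drop = (before - after) / before
        if drop >= min_drop:
            rows.append((page, before, after, round(drop * 100, 1)))
    return sorted(rows, key=lambda r: r[1] - r[2], reverse=True)

priority_pages = biggest_losers(previous, current)
for page, before, after, pct in priority_pages:
    print(f"{page}: {before} -> {after} clicks ({pct}% drop)")
```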

Step 3: Analyze the Lost Keywords

Switch to the Queries tab with the same comparison dates. Identify which keywords dropped in average position. If you see keywords that moved from page 1 (positions 1-10) to page 2 or beyond, those represent your biggest recovery opportunities. Cross-check these against Semrush or Ahrefs to see if competitors gained those positions.
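The same export approach works for queries. This sketch flags keywords whose average position moved from page 1 (positions 1-10) to page 2 or beyond; the queries and positions are hypothetical examples, and `fell_off_page_one` is an illustrative helper name.

```python
# Hypothetical average positions before and after the update.
queries = [
    {"query": "example service near me", "before": 4.2, "after": 14.8},
    {"query": "example pricing guide", "before": 8.9, "after": 9.6},
    {"query": "example how to apply", "before": 2.1, "after": 31.0},
    {"query": "example reviews", "before": 18.0, "after": 42.5},
]

def fell_off_page_one(rows, page_one_max=10.0):
    """Return queries that were on page 1 before and are beyond it now.

    Sorted by the number of positions lost, largest first.
    """
    lost = [r for r in rows if r["before"] <= page_one_max < r["after"]]
    return sorted(lost, key=lambda r: r["after"] - r["before"], reverse=True)

recovery_targets = fell_off_page_one(queries)
for row in recovery_targets:
    print(f'{row["query"]}: position {row["before"]} -> {row["after"]}')
```

Queries that were already beyond page 1 before the update are excluded on purpose, since they were not driving meaningful traffic to begin with.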

Step 4: Run a Technical Crawl

Use Screaming Frog or a similar crawler to audit your entire site. Look for the issues I found across my 8 audits: broken canonicals, noindex tags on important pages, missing H1s or duplicate H1s, server errors, orphan pages, and oversized images. Technical issues compound the damage from content-quality problems.
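For spot checks on individual pages, a few of these issues can be detected with Python's standard library alone. The sketch below is not a substitute for a full crawl and is not how Screaming Frog works internally; it only inspects one HTML document for an empty title, a noindex directive, a missing canonical, and missing or multiple H1 tags. The sample HTML is made up.

```python
from html.parser import HTMLParser

class OnPageAudit(HTMLParser):
    """Collect a few on-page signals from a single HTML document."""

    def __init__(self):
        super().__init__()
        self.h1_count = 0
        self.has_title = False
        self.noindex = False
        self.canonical = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "h1":
            self.h1_count += 1
        elif tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            if "noindex" in (attrs.get("content") or "").lower():
                self.noindex = True
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Only non-blank text inside <title> counts as a real title.
        if self._in_title and data.strip():
            self.has_title = True

def audit_html(html):
    """Return a list of human-readable issues found in the HTML string."""
    parser = OnPageAudit()
    parser.feed(html)
    issues = []
    if not parser.has_title:
        issues.append("missing or empty title")
    if parser.noindex:
        issues.append("noindex directive present")
    if parser.canonical is None:
        issues.append("no canonical link")
    if parser.h1_count == 0:
        issues.append("missing H1")
    elif parser.h1_count > 1:
        issues.append(f"multiple H1 tags ({parser.h1_count})")
    return issues

# Made-up page exhibiting several of the problems described above.
sample = """<html><head><title></title>
<meta name="robots" content="noindex, follow">
</head><body><h1>Pins</h1><h1>Custom pins</h1></body></html>"""

print(audit_html(sample))
```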

Step 5: Evaluate Content Quality Page by Page

For every page that lost traffic, ask these questions:

- Does this page provide a complete answer to the user’s query?

- Does it offer something unique that competitors do not?

- Is there a real author with demonstrable expertise?

- Does the page include supporting media like images, charts, or video?

- Is the word count competitive with the pages currently ranking for this keyword?

If the answer to most of these is no, the page needs a full content overhaul, not minor tweaks.
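To apply this checklist consistently across dozens of pages, the answers can be recorded and scored. The sketch below is a minimal example; the key names are illustrative, the answers still come from a human reviewer, and treating "most" as more than half of the five questions is an assumption.

```python
# The five questions from this step, as illustrative keys.
CHECKLIST = (
    "answers_query_completely",
    "offers_something_unique",
    "has_expert_author",
    "includes_supporting_media",
    "competitive_word_count",
)

def needs_overhaul(answers):
    """Return True when most checklist answers are 'no'.

    `answers` maps checklist keys to True (yes) or False (no).
    Unanswered questions are counted as 'no'.
    """
    no_count = sum(1 for item in CHECKLIST if not answers.get(item, False))
    return no_count > len(CHECKLIST) / 2

# Hypothetical review of one page: three 'no' answers out of five.
page_review = {
    "answers_query_completely": True,
    "offers_something_unique": False,
    "has_expert_author": False,
    "includes_supporting_media": False,
    "competitive_word_count": True,
}
print(needs_overhaul(page_review))
```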

Realistic Recovery Timelines (Not Hype)

One thing I tell every client upfront: algorithm recovery is not instant. Anyone promising overnight results is not being honest with you.

Based on my experience across 300+ recovery projects, here is what realistic timelines look like.

For sites hit by a single core update with mostly content-quality issues, expect 4 to 8 weeks for initial improvements after implementing changes. Full recovery often takes 2 to 3 update cycles, meaning 3 to 6 months. I covered the specific tactics that have worked for Google March 2026 core update recovery in a separate guide if you want the full breakdown.

For sites with combined issues (content + technical + E-E-A-T gaps), the timeline extends to 6 to 12 months. This was the case for sites like PartsOfAmerica and FreedomCare in my audit group, where the problems were layered and interconnected. Sites that also lost Google Discover traffic face a slightly different recovery path, which I detailed in my February 2026 Discover core update recovery guide.

For sites with manual actions or severe spam penalties, recovery requires a formal reconsideration request after fixes, and Google’s review process adds additional weeks.
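The three scenarios above can be summarized as a small lookup. This sketch only restates the ranges given in this section; treating two or more problem layers as "combined issues" is an assumption of the sketch, and `recovery_estimate` is an illustrative name.

```python
def recovery_estimate(content=False, technical=False, eeat=False, manual_action=False):
    """Return (min_months, max_months) for full recovery, or None.

    None means no range applies: a manual action needs a reconsideration
    request, and Google's review time is added on top of the fixes.
    """
    if manual_action:
        return None
    layers = sum([content, technical, eeat])
    if layers == 0:
        raise ValueError("at least one problem area must be flagged")
    if layers >= 2:
        # Combined issues: the longer 6 to 12 month range.
        return (6, 12)
    # A single problem area after one core update: 2 to 3 update cycles.
    return (3, 6)

print(recovery_estimate(content=True))
print(recovery_estimate(content=True, technical=True, eeat=True))
print(recovery_estimate(content=True, manual_action=True))
```

These ranges describe full recovery. Initial improvements for content-only cases can show up earlier, in the 4 to 8 week window mentioned above.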

The key factor in recovery speed is not just fixing problems. It is demonstrating to Google over time that your site has genuinely improved in quality, relevance, and user value.

Why These Findings Matter for Your Site

The patterns I documented across these 8 audits are not unique to these businesses. If your website traffic dropped after a Google update in 2026, there is a high probability that your site shares at least two or three of the same issues: thin content, weak E-E-A-T signals, technical debt, or duplicate/AI-generated content.

The difference between sites that recover and sites that do not comes down to one thing: a systematic, data-driven approach to diagnosis and implementation. Random fixes do not work. Publishing more content on a broken foundation does not work. You need to identify the specific algorithmic signals your site is failing on and address them methodically.

I have published the full audit methodology and findings for all 8 client websites in my SEO algorithm recovery audit report. If you want to see the raw data, screenshots, and specific recommendations for each site, the full report is available there.

Ready to Diagnose Your Traffic Drop?

If your website traffic dropped after a recent Google update and you want expert help identifying the cause, I offer a free preliminary diagnosis call. I will review your Search Console data, identify which update likely impacted your site, and outline the recovery path before you commit to anything.

Frequently Asked Questions

Why did my website traffic drop after a Google update in 2026?

Google’s 2026 core updates, helpful content updates, and spam updates all re-evaluate site quality. The most common reasons for traffic loss include thin or AI-generated content, missing E-E-A-T signals, technical SEO issues like broken canonicals and indexing errors, and duplicate content. The specific update that hit your site determines the recovery approach.

How long does it take to recover from a Google algorithm update?

For content-quality issues tied to a single core update, initial improvements typically appear within 4 to 8 weeks after changes are implemented. Full recovery often takes 2 to 3 update cycles (3 to 6 months). Sites with layered problems across content, technical, and E-E-A-T areas may need 6 to 12 months.

Can I recover my traffic without hiring an SEO expert?

It depends on the severity and complexity of the issues. If your site was hit by a single update and the problems are straightforward (such as thin content on a few pages), you can follow the diagnosis framework in this article to start fixing things yourself. For sites with multiple overlapping issues, especially in competitive or YMYL niches, professional diagnosis significantly improves both the accuracy of the fix and the speed of recovery.

What tools do I need to diagnose a Google algorithm traffic drop?

At a minimum, you need Google Search Console (free) to identify timing and lost pages and keywords. Screaming Frog (free for up to 500 URLs) handles technical audits. For competitive analysis and backlink review, Semrush and Ahrefs are the industry standards. I use all four in every audit.

Is my site penalized or just demoted by an algorithm update?

Check Google Search Console under Security & Manual Actions. If you see a manual action listed, your site has a penalty that requires a formal reconsideration request. If there is no manual action, but your traffic dropped after a confirmed update, your site was likely affected algorithmically. Algorithmic demotions do not require a reconsideration request but do require substantive improvements to recover.