Scaled content abuse is the single biggest enforcement target in Google search right now. The March 2026 core update made that unmistakably clear. Sites publishing hundreds or thousands of AI-generated, template-driven, or programmatically created pages without genuine editorial value saw traffic drops between 50% and 90% within two weeks of the rollout.

I have worked on more than 300 algorithm recovery projects over the past seven years, and scaled content penalties are now the most common issue landing in my audit queue. This guide breaks down exactly what Google classifies as scaled content abuse, how to audit your own site for risk, and the step-by-step triage framework I use to recover affected websites.

What Does Google Classify as Scaled Content Abuse?

Scaled content abuse is not about AI. It is not about automation. It is about manipulation at scale. Google defines it as generating many pages for the primary purpose of manipulating search rankings, with little or no value added for users. The production method is irrelevant. Hand-written thin content at scale triggers the same enforcement as mass AI output.

Google rebranded its older policy against spammy auto-generated content in March 2024, replacing it with this broader, method-agnostic definition. The March 2026 core update then applied aggressive enforcement against three specific patterns.

| What is scaled content abuse? Scaled content abuse occurs when a website publishes large volumes of pages primarily to manipulate search rankings rather than serve users. Google enforces this policy regardless of whether the content was created by AI, templates, human writers, or programmatic tools. The penalty is for the behavior pattern, not the production method. |

The three patterns that drove most March 2026 penalties:

| Pattern | What It Looks Like | Example |
| --- | --- | --- |
| Mass AI page generation | 50-500 articles published daily, identical structure, no editorial review, no first-hand experience | AI-written how-to articles across thousands of keyword variations with no original insight |
| Template-with-variable substitution | Hundreds of near-identical pages differing only in city name or product variable | “Plumber in [City]” pages repeated across 500 locations with no unique local data |
| Aggregator scraping without added value | Content scraped from feeds, search results, or other sites with no original context | Product roundups or news summaries stitched from external sources with no expert analysis |

The critical distinction here is intent versus method. A local business directory with 10,000 pages can rank well if each page offers verified, unique local data. A site with just 50 thin template pages can get penalized. Google’s SpamBrain system evaluates content quality signals at the page and site level, and it does not count pages to determine a threshold. It evaluates whether the content adds genuine value.

If your site was hit by a Google spam update and you suspect scaled content was the trigger, the audit process below will help you confirm the diagnosis.

How to Audit Your Site for Scaled Content Risk

Before you remove a single page, you need a clear picture of what Google is likely flagging. I run this audit on every scaled content recovery case, and it consistently surfaces the pages dragging down domain-level quality signals.

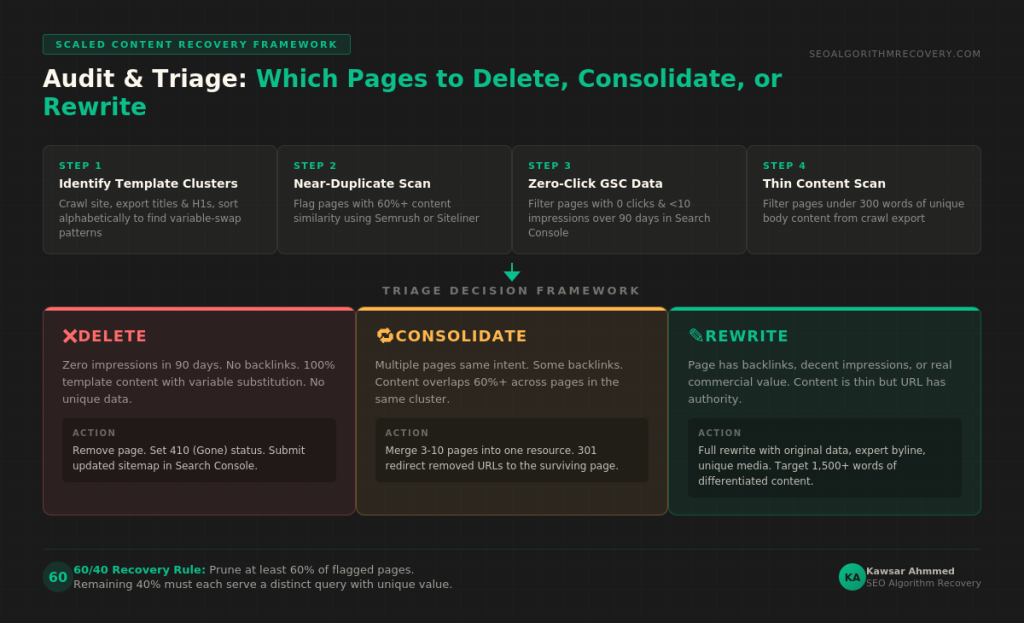

Step 1: Identify Template Page Clusters

Open Screaming Frog and crawl your full site. Export the title tags and H1 tags, then sort alphabetically. Template pages reveal themselves immediately because they share identical structures with only one or two variables swapped. A site I audited in February 2026 had 1,200 service pages where the only difference was the city name inserted into the title, H1, and two body paragraphs. Every one of those pages showed near-zero clicks and impressions in Search Console.
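
If the crawl is too large to scan by eye, the same check can be approximated in code: mask the substituted variable in each title and group URLs by the resulting pattern. This is a minimal sketch assuming you have exported (URL, title) pairs from the crawl and already know which values your templates swap in; `VARIABLES`, `title_pattern`, and `template_clusters` are hypothetical names, not part of any tool's API.

```python
import re
from collections import defaultdict

# Hypothetical list of values the templates substitute (cities, products, etc.).
VARIABLES = ["Austin", "Boston", "Denver", "New York City"]


def title_pattern(title: str, variables: list[str]) -> str:
    """Replace every known variable value in a title with a {VAR} placeholder."""
    pattern = title
    # Mask longer values first so multi-word names are replaced whole.
    for value in sorted(variables, key=len, reverse=True):
        pattern = re.sub(re.escape(value), "{VAR}", pattern, flags=re.IGNORECASE)
    return pattern.strip()


def template_clusters(pages, variables, min_size=3):
    """Group (url, title) pairs by masked title; keep groups of min_size or more."""
    groups = defaultdict(list)
    for url, title in pages:
        groups[title_pattern(title, variables)].append(url)
    return {p: urls for p, urls in groups.items() if len(urls) >= min_size}


pages = [
    ("/plumber-austin", "Plumber in Austin | Acme Plumbing"),
    ("/plumber-boston", "Plumber in Boston | Acme Plumbing"),
    ("/plumber-denver", "Plumber in Denver | Acme Plumbing"),
    ("/about", "About Acme Plumbing"),
]
for pattern, urls in template_clusters(pages, VARIABLES).items():
    print(f"{len(urls)} pages share the title pattern: {pattern}")
```

The same masking works on exported H1s; a single pattern that accounts for hundreds of URLs is the cluster this step is looking for.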

Step 2: Check for Near-Duplicate Content

Use Semrush or Siteliner to run a duplicate content scan. Flag any pages sharing more than 60% content similarity. These are exactly the pages Google’s algorithms cluster together as scaled abuse. In recovery projects, I consistently find that the worst offenders are product roundup pages and location landing pages that were generated from a single template with minimal differentiation.
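
Semrush and Siteliner each compute similarity their own way, so their percentages will not match any script exactly. As a rough, hypothetical stand-in, the sketch below compares pages by Jaccard similarity of five-word shingles (overlapping word sequences) and flags pairs above the 60% line. It assumes you already have the extracted body text for each URL, and it compares every pair, which is workable for a few thousand pages but would need a technique such as MinHash on very large sites.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 5) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles (overlapping word runs) in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        # Very short text: treat the whole thing as a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by the size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicates(pages: dict[str, str], threshold: float = 0.6, k: int = 5):
    """Return (url_a, url_b, similarity) for every pair above the threshold."""
    sets = {url: shingles(body, k) for url, body in pages.items()}
    flagged = []
    for a, b in combinations(sorted(sets), 2):
        similarity = jaccard(sets[a], sets[b])
        if similarity > threshold:
            flagged.append((a, b, round(similarity, 2)))
    return flagged
```

Shingle-based scores are strict: swapping a single city name changes every shingle that contains it, so a pair a tool reports as highly similar may score lower here. Calibrate the threshold against a few pairs you have already judged by hand.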

Step 3: Pull Search Console Zero-Click Data

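Go to Google Search Console, open the Performance report, select the Pages tab, and sort by impressions in ascending order so the weakest pages rise to the top. Any indexed page with zero clicks and fewer than 10 impressions over 90 days is a candidate for removal or consolidation. The report only lists pages that received at least one impression, so indexed URLs that never appear in it are zero-impression pages and belong on the list as well. These pages are indexed but delivering no user value, which is exactly the pattern Google’s scaled content enforcement targets.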
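
The check can be scripted by comparing your crawl list against a 90-day Pages export, treating crawled URLs that are missing from the export as zero-impression pages. A minimal sketch, assuming both lists are already loaded and the URLs are normalized the same way (protocol, trailing slashes); `removal_candidates` is a hypothetical helper. On large sites, note that the Search Console interface limits exports to 1,000 rows, so the Search Analytics API may be needed for a complete list.

```python
def removal_candidates(crawled_urls, gsc_rows, max_impressions=10):
    """Return (url, impressions) for crawled URLs with zero clicks and
    fewer than max_impressions impressions, lowest impressions first.

    gsc_rows maps URL -> (clicks, impressions) from a 90-day Pages export.
    A crawled URL missing from the export is treated as 0 clicks and
    0 impressions, since the Performance report omits pages with no impressions.
    """
    candidates = []
    for url in crawled_urls:
        clicks, impressions = gsc_rows.get(url, (0, 0))
        if clicks == 0 and impressions < max_impressions:
            candidates.append((url, impressions))
    return sorted(candidates, key=lambda row: row[1])


crawled = ["/guides/pipes", "/plumber-austin", "/plumber-boston", "/plumber-denver"]
gsc = {
    "/guides/pipes": (42, 3100),
    "/plumber-austin": (0, 7),
    "/plumber-boston": (0, 25),
}
print(removal_candidates(crawled, gsc))
```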

Step 4: Run a Thin Content Word Count Scan

Export your Screaming Frog crawl data and filter for pages with fewer than 300 words of unique body content. Word count alone is not a penalty signal, but when combined with template structure and low engagement metrics, it confirms the pattern. Sites hit by traffic drops after a Google update almost always have a large cluster of thin, template-driven pages pulling down the entire domain.
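
The rule that word count only confirms the pattern when it coincides with the other signals can be encoded directly. In this sketch the `Page` fields are hypothetical: `in_template_cluster` would come from Step 1, `low_engagement` from Step 3, and `body` is assumed to be main-content text with navigation and footer boilerplate already removed.

```python
import re
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    body: str                  # main content only, boilerplate stripped
    in_template_cluster: bool  # from the title/H1 clustering in Step 1
    low_engagement: bool       # e.g. zero clicks and <10 impressions (Step 3)


def word_count(text: str) -> int:
    """Count word-like tokens in the text."""
    return len(re.findall(r"\w+", text))


def thin_template_pages(pages: list[Page], min_words: int = 300) -> list[str]:
    """Flag a page only when it is thin, templated, AND low-engagement."""
    return [
        p.url
        for p in pages
        if word_count(p.body) < min_words and p.in_template_cluster and p.low_engagement
    ]


pages = [
    Page("/plumber-denver", "Need a plumber in Denver? Call us today.", True, True),
    Page("/faq", "Short answers to common questions.", False, True),
    Page("/guides/pipes", "word " * 1200, True, True),
]
print(thin_template_pages(pages))  # only the thin template page is flagged
```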

Triage Framework: Delete, Consolidate, or Rewrite

Not every flagged page needs to be deleted. The goal is to remove manipulative patterns while preserving pages that can deliver genuine value with improvement. I use a three-tier triage system that has produced consistent recovery results across dozens of scaled content cases.

| Action | Criteria | How to Execute |
| --- | --- | --- |
| DELETE | Zero impressions in 90 days. No backlinks. Content is 100% template with variable substitution. No unique data or expert insight. | Remove the page entirely. Set a 410 (Gone) status code. Do not redirect to another thin page. Submit the updated sitemap in Search Console. |
| CONSOLIDATE | Multiple pages targeting the same intent. Some have backlinks or moderate impressions. Content overlaps by 60%+ across pages. | Merge the best content from 3-10 pages into one comprehensive resource. 301 redirect the removed URLs to the consolidated page. Ensure the surviving page has unique depth. |
| REWRITE | Page has existing backlinks, decent impressions, or covers a topic with real commercial value. Content is thin but the URL has authority. | Completely rewrite with original data, expert perspective, and first-hand experience. Add author byline, unique media, and structured data. Target 1,500+ words of differentiated content. |
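
The triage table can be read as an ordered set of rules. The sketch below is one hypothetical encoding: the `PageStats` fields, the 100-impression cut-off standing in for "decent impressions", and the fallback `REVIEW` bucket for pages no rule covers are assumptions, and the commercial-value flag still has to be set by a person.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    impressions_90d: int
    backlinks: int
    max_overlap: float      # highest similarity to any sibling page, 0.0 to 1.0
    pure_template: bool     # variable substitution only, no unique data or insight
    commercial_value: bool  # set by a human reviewer


def triage(p: PageStats, decent_impressions: int = 100) -> str:
    """Apply the DELETE / CONSOLIDATE / REWRITE rules in order."""
    if p.impressions_90d == 0 and p.backlinks == 0 and p.pure_template:
        return "DELETE"       # serve 410, no redirect
    if p.max_overlap >= 0.6:
        return "CONSOLIDATE"  # merge, then 301 to the surviving page
    if p.backlinks > 0 or p.impressions_90d >= decent_impressions or p.commercial_value:
        return "REWRITE"      # keep the URL, replace the content
    return "REVIEW"           # no rule matched; needs a manual decision


for page in [
    PageStats("/plumber-springfield", 0, 0, 0.92, True, False),
    PageStats("/plumber-austin-tx", 40, 2, 0.71, True, False),
    PageStats("/water-heater-repair", 850, 5, 0.20, False, True),
]:
    print(page.url, triage(page))
```

Checking DELETE first means a pure-template page with no traffic and no links is removed even if it also overlaps heavily with siblings, since there is nothing worth merging from it.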

The most common mistake I see in recovery attempts is trying to salvage every page with light edits. Swapping a few sentences or adding 200 words to a template page does not change the underlying pattern. Google’s systems evaluate the scaled nature of the content across your entire site. If you keep 80% of your template pages and lightly edit them, the pattern persists, and recovery stalls. This is why the next section matters.

How Much Content Should You Prune Before Google Re-Evaluates?

Based on data from my recovery cases, sites that remove or significantly overhaul at least 60% of their flagged scaled content pages during the first round of cleanup tend to see positive movement at the next core update evaluation. Sites that prune only 20-30% of problematic pages typically do not recover until a second or third round of deeper cuts.

| The 60/40 Recovery Rule: To trigger a meaningful re-evaluation from Google’s core systems, aim to prune, consolidate, or completely rewrite at least 60% of flagged scaled content pages. The remaining 40% should only survive if each page demonstrably serves a distinct user query with unique, expert-level content. |
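
For illustration, if all 1,200 template pages from the Step 1 example had been flagged, the rule would mean deleting, consolidating, or fully rewriting at least 720 of them in the first round, leaving at most 480 that each have to justify their place. The hypothetical helper below does that arithmetic with integer ceiling division, so fractional targets always round up:

```python
def pruning_target(flagged_pages: int, percent: int = 60) -> int:
    """Minimum flagged pages to delete, consolidate, or fully rewrite.

    Uses integer ceiling division, so 60% of 1,001 pages rounds up to 601.
    """
    return -(-flagged_pages * percent // 100)


def meets_first_round_target(actioned: int, flagged_pages: int, percent: int = 60) -> bool:
    """True if the pages already actioned reach the first-round target."""
    return actioned >= pruning_target(flagged_pages, percent)


flagged = 1200
target = pruning_target(flagged)
print(f"Action at least {target} pages; at most {flagged - target} may survive.")
```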

I want to be direct about something. There is no magic number that guarantees recovery. Every site is different, and Google does not publish a threshold. The 60/40 benchmark comes from patterns I have observed across scaled content recovery projects in 2025 and 2026. Treat it as a starting guideline, not a guarantee.

After pruning, do not request re-indexing of the deleted URLs. For high-priority pages, the Removals tool in Search Console can hide them from search results quickly, but that removal is temporary (roughly six months), so the 410 status code must stay in place. For the rest, let Googlebot discover the 410 status codes naturally. Force-fetching deleted pages can slow down the crawl budget reallocation you need for your surviving quality content.
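
How you return a 410 or a 301 depends on your stack; it is usually a rule in the web server, CDN, or CMS rather than application code. Purely as a framework-free illustration using Python's standard-library `http.server`, the sketch maps DELETE URLs to 410 and CONSOLIDATE URLs to a 301 pointing at the merged page. The `GONE` and `REDIRECTS` entries are hypothetical placeholders for the lists your triage produces.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Optional

# Hypothetical output of the triage step.
GONE = {"/plumber-springfield", "/plumber-shelbyville"}          # DELETE -> 410
REDIRECTS = {"/plumber-austin-tx": "/plumbing-services/austin"}  # CONSOLIDATE -> 301


def status_for(path: str) -> tuple[int, Optional[str]]:
    """Return the status code and optional Location header for a request path."""
    path = path.split("?", 1)[0]  # ignore query strings when matching
    if path in GONE:
        return 410, None
    if path in REDIRECTS:
        return 301, REDIRECTS[path]
    return 200, None


class PruneHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        code, location = status_for(self.path)
        self.send_response(code)
        if location:
            self.send_header("Location", location)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.end_headers()
        # Placeholder body; a real site would render the page for 200 responses.
        self.wfile.write(f"{code}\n".encode("utf-8"))


def run(port: int = 8000) -> None:
    """Serve locally; check with: curl -i http://127.0.0.1:8000/plumber-springfield"""
    HTTPServer(("127.0.0.1", port), PruneHandler).serve_forever()
```

Keep redirect targets pointed at the consolidated resource itself; under the triage rules, a deleted page should never redirect to another thin page.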

Sites recovering from expired domain abuse penalties face a similar pruning calculus, although the E-E-A-T remediation component is typically more intensive because the domain’s topical history does not match the current content.

Recovery Timeline and How to Monitor Progress in Search Console

Scaled content abuse penalties applied through core updates do not resolve through a reconsideration request. There is no manual action notification in Search Console for algorithmic enforcement. Recovery happens when Google re-crawls your cleaned-up site, and the next core update re-evaluates your domain quality signals.

| Timeframe | What to Expect |
| --- | --- |
| Week 1-2 | Complete your content audit and triage. Execute deletions and 410 status codes. Submit updated sitemaps. |
| Week 3-4 | Begin rewrites on surviving pages. Add author bylines, unique data, and structured markup. Monitor crawl stats for de-indexation of removed pages. |
| Week 5-8 | Consolidation phase. Merge related thin pages into comprehensive resources. Build internal links from quality pages to rewritten content. |
| Next core update | This is when algorithmic recovery typically becomes visible. Google runs core updates roughly every 2-3 months. Full traffic restoration can take 1-2 update cycles depending on severity. |

How to track recovery in Google Search Console:

Open the Performance report and compare the period before your cleanup with the period after it. Focus on two metrics: total indexed pages, which you will find in the Pages report under Indexing and which should decrease as pruned content drops out, and average position on surviving pages, which should gradually improve. If you see impressions rising on your remaining pages within 4-6 weeks, that is a strong early indicator that the cleanup is working.
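
The before/after comparison on surviving pages can also be scripted from two Pages exports of the Performance report. This sketch assumes each export has been loaded as a mapping of URL to (impressions, average position) and that `surviving_urls` lists the pages you kept; the combined position is an impression-weighted approximation, not the exact figure Search Console displays.

```python
def summarize(rows, urls):
    """Total impressions and impression-weighted average position for the urls.

    rows maps URL -> (impressions, average_position). Lower positions are better.
    """
    total = 0
    weighted = 0.0
    for url in urls:
        impressions, position = rows.get(url, (0, 0.0))
        total += impressions
        weighted += impressions * position
    return total, (weighted / total if total else None)


def recovery_report(before, after, surviving_urls):
    """Compare the pre-cleanup and post-cleanup periods for the surviving pages."""
    b_impressions, b_position = summarize(before, surviving_urls)
    a_impressions, a_position = summarize(after, surviving_urls)
    return {
        "impressions_change": a_impressions - b_impressions,
        "impressions_rising": a_impressions > b_impressions,
        "position_before": b_position,
        "position_after": a_position,
        "position_improved": (
            b_position is not None and a_position is not None and a_position < b_position
        ),
    }


before = {"/water-heater-repair": (100, 20.0), "/plumbing-services/austin": (100, 30.0)}
after = {"/water-heater-repair": (150, 15.0), "/plumbing-services/austin": (100, 25.0)}
print(recovery_report(before, after, list(before)))
```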

Also check the Pages report under Indexing. Watch for a decrease in “Crawled, currently not indexed” and “Discovered, currently not indexed” pages. When Google stops trying to crawl your removed thin pages and focuses crawl budget on your quality content, recovery accelerates.

Understanding the difference between manual actions and algorithm penalties is essential here. Scaled content enforcement through core updates follows a different recovery path than a manual spam action, which requires a formal reconsideration request.

Can Programmatic SEO Still Work After the Crackdown?

Yes, but only with genuine data differentiation. The March 2026 enforcement targeted scaled content abuse, not programmatic SEO as a discipline. Sites built on unique, structured data, such as directories with verified business listings, comparison tools with live pricing feeds, or travel platforms with real inventory, continued to rank normally through the update.

The test for any programmatic page is straightforward. Does this page answer a distinct user query that no other page on my site already answers? Does it contain information a user cannot find by visiting another page in this same cluster? If the answer to either question is no, the page is a liability. According to Google’s spam policy documentation, the focus remains on whether content was generated to manipulate rankings rather than to serve genuine user needs.
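
The second question, whether a page contains anything a user could not find elsewhere in the same cluster, can be approximated by measuring how much of each page's text appears on no sibling page. A minimal sketch, assuming you have the body text for every page in one cluster; the four-word shingle size and the function names are hypothetical choices, and a low score is a prompt for human review rather than a verdict.

```python
import re
from collections import Counter


def shingles(text: str, k: int = 4) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles in the text (empty if under k words)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def unique_share(cluster: dict[str, str], k: int = 4) -> dict[str, float]:
    """For each page, the share of its shingles that appear on no other page."""
    sets = {url: shingles(text, k) for url, text in cluster.items()}
    # Count how many pages in the cluster contain each shingle.
    page_freq = Counter(s for page_set in sets.values() for s in page_set)
    shares = {}
    for url, page_set in sets.items():
        unique = sum(1 for s in page_set if page_freq[s] == 1)
        shares[url] = unique / len(page_set) if page_set else 0.0
    return shares


cluster = {
    "/plumber-austin": "Licensed plumbers serving Austin for emergency repairs, drain cleaning and water heater installs.",
    "/plumber-boston": "Licensed plumbers serving Boston for emergency repairs, drain cleaning and water heater installs.",
}
print(unique_share(cluster))  # each page: 0.4 unique, so 60% of its shingles are shared
```

Even a one-word swap makes the shingles around that word unique, so short template pages can score higher than their real differentiation deserves; compare scores across the cluster rather than against a fixed cut-off.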

For ecommerce sites, this means product pages need unique descriptions, original photography, and differentiated specifications. Your post-update SEO audit should include a specific check for template-driven product pages that share more than 60% content similarity with other pages on the same site.

Why E-E-A-T Signals Cannot Be Manufactured at Scale

One pattern that stood out in March 2026 recovery data is that sites with strong E-E-A-T signals (demonstrable first-hand experience, named authors with verifiable credentials, and expert analysis) survived the update even when they had large content volumes. The sites that collapsed were the ones where content was produced at a speed that made genuine expertise impossible.

You cannot add authentic experience to 500 pages in a week. That is precisely why Google uses scaled content patterns as a spam signal. If your recovery plan includes adding author bylines and “expert quotes” to template pages without actually involving subject matter experts, you are treating the symptom, not the disease. Recovery efforts that work consistently address the content quality at a fundamental level, not just the surface-level trust signals.

Understanding how to recover from traffic lost to AI Overviews also ties into this discussion, because Google increasingly prioritizes content with original perspectives that AI summaries cannot easily replicate. Sites built on scaled, undifferentiated content are especially vulnerable to losing visibility in AI-generated search results.

Frequently Asked Questions

Does Google penalize all AI-generated content as scaled content abuse?

No. Google’s policy targets content created to manipulate rankings at scale, regardless of production method. AI-generated content that provides genuine value, demonstrates expertise, and passes editorial review is treated the same as human-written content. The penalty is for the behavior pattern of mass-producing thin content, not for using AI tools.

How long does recovery from a scaled content abuse penalty take?

Recovery from algorithmically applied scaled content penalties typically requires 1-2 core update cycles, which means roughly 2-6 months depending on when the next core update rolls out and how thoroughly you address the underlying issues. Sites that execute aggressive pruning and quality improvements in the first two weeks tend to recover faster.

Should I delete all my programmatic pages to recover?

Not necessarily. Pages with unique, verified data that serve distinct user queries can and should be preserved. The triage framework above helps you decide which pages to delete, which to consolidate, and which to rewrite. Blanket deletion can actually hurt recovery if you remove pages that had genuine authority and backlinks.

Will a manual action appear in Search Console for scaled content abuse?

Scaled content enforcement through core updates does not generate a manual action notification. Your rankings simply drop when the algorithm re-evaluates your site. If you receive a manual action specifically mentioning spam, that is a separate enforcement mechanism with its own reconsideration request process.

Can I just noindex the thin pages instead of deleting them?

Noindexing removes pages from the index but does not eliminate the crawl burden or send the same clear signal as a 410 status code. For scaled content recovery, I recommend using 410 (Gone) for pages you want permanently removed. This tells Googlebot the page no longer exists, so Google drops it from the index and revisits the URL less and less often over time.

If you are unsure whether your traffic loss was caused by scaled content abuse, a spam penalty, or a broader site reputation abuse issue, a professional audit can help pinpoint the exact cause. My full algorithm recovery audit report walks through the diagnostic methodology I use across every recovery engagement.

For the latest version of Google’s official enforcement criteria, review the Google Search spam policies documentation. Industry analysis from Search Engine Journal’s policy breakdown also provides useful context on how the scaled content definition has evolved since 2024.

| Published hundreds of AI-generated or template pages and lost rankings? Get a free scaled content risk assessment from Kawsar Ahmmed. Request Your Free Audit Now |